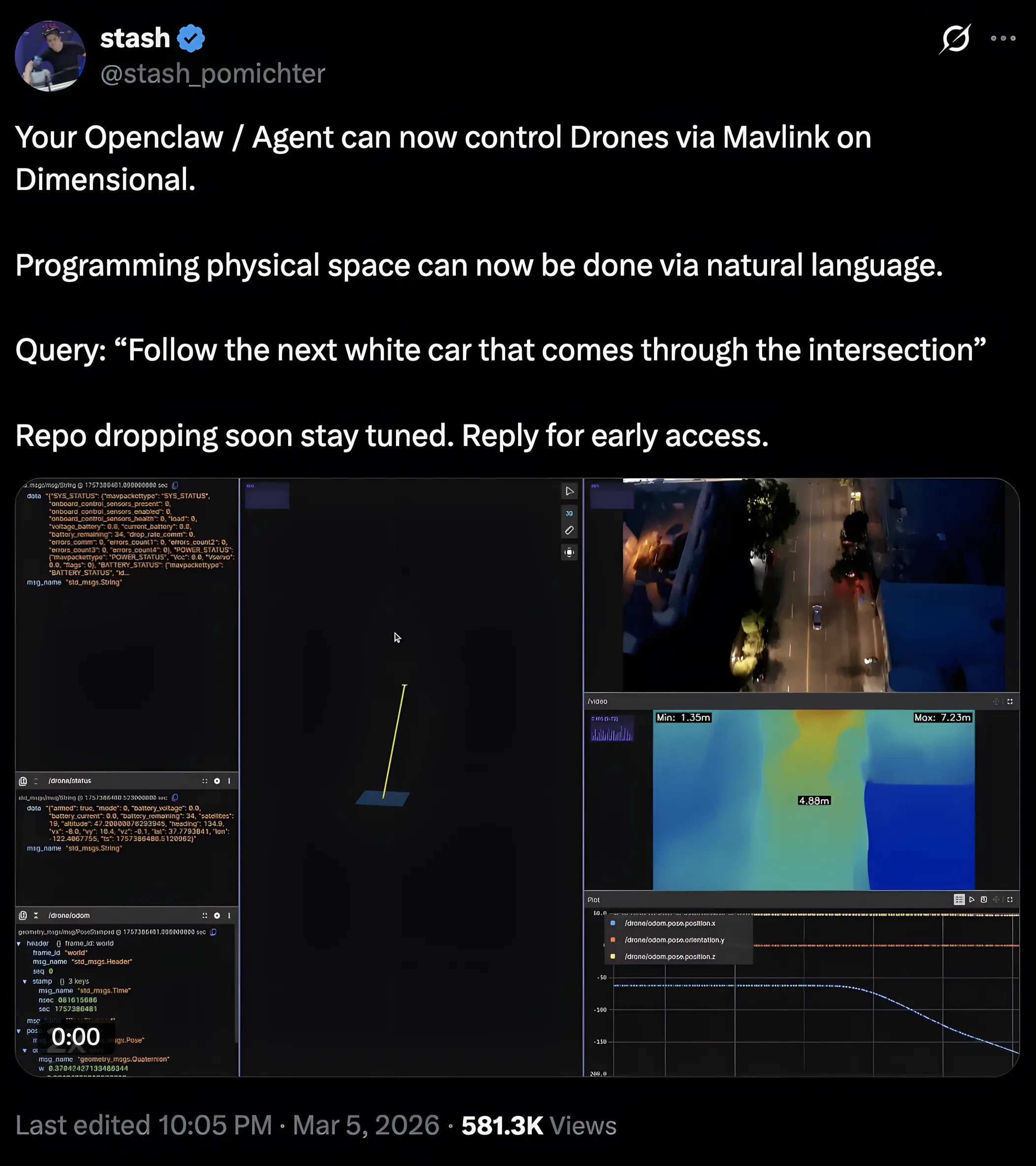

These days, there's no shortage of unsettling content on Twitter, so the bar for something to stop me mid-scroll is much higher than it used to be. This week, I was pulled in by a clip of a drone hovering over a live city intersection at night, tracking a white car through traffic on the strength of a single typed sentence. A developer posted it as a product announcement, and it was quote-tweeted into my feed by @AISafetyMemes, who was alarmed about surveillance and malicious use. Those are legitimate concerns, many of them worthy of their own conversations.

But my brain went somewhere else first.

This isn't really a story about one developer or one clip. It's about where we've landed, almost without noticing, at the intersection of AI capability and physical consequence. Language models, autonomous agents, and the whole accelerating tech stack have made things possible faster than anyone with rulemaking authority has been able to respond. Drones are one more place where that gap stops being abstract.

Drones share airspace with manned aircraft. They fly over people. When something goes wrong, it goes wrong over a neighborhood.

Who I Am and Why It Matters Here

What makes that clip land differently depending on who's watching is the combination of what you know about the technology and what you know about the airspace. Most people have one or the other. Having both (a background in computational linguistics and machine learning on one side, an FAA Part 107 certification and years of operational flight on the other) means the clip reads in two registers at once. The NLP implementation is genuinely impressive. And the flight was, by any reasonable reading of 14 CFR Part 107, not legal to conduct.

What I saw was a drone operating beyond visual line of sight, over moving traffic, at night, without any indication the operator had met the requirements these conditions carry.

Three distinct regulatory categories. One eleven-second clip.

Real Flight or Simulation?

It's worth saying up front: whether that footage was a live flight or a high-fidelity simulation is genuinely unclear. Dimensional, the project behind the clip, markets itself as an open-source OS for physical space, built for real robot and drone control via natural language, and the interface shown is consistent with both real MAVLink telemetry and the kind of software-in-the-loop simulation developers use for testing. The developer didn't present it as a simulation; they presented it as a capability demonstration for something people are already signing up to get early access to.

Here's the point: if it was a live flight, the violations happened. If it was a simulation, the intent is still real flight, and the regulatory blind spot is identical either way. The FAA has never distinguished between a demo and a deployment when an aircraft is in shared public airspace, and it isn't going to start because the instruction came from a language model.

What the Violations Actually Mean

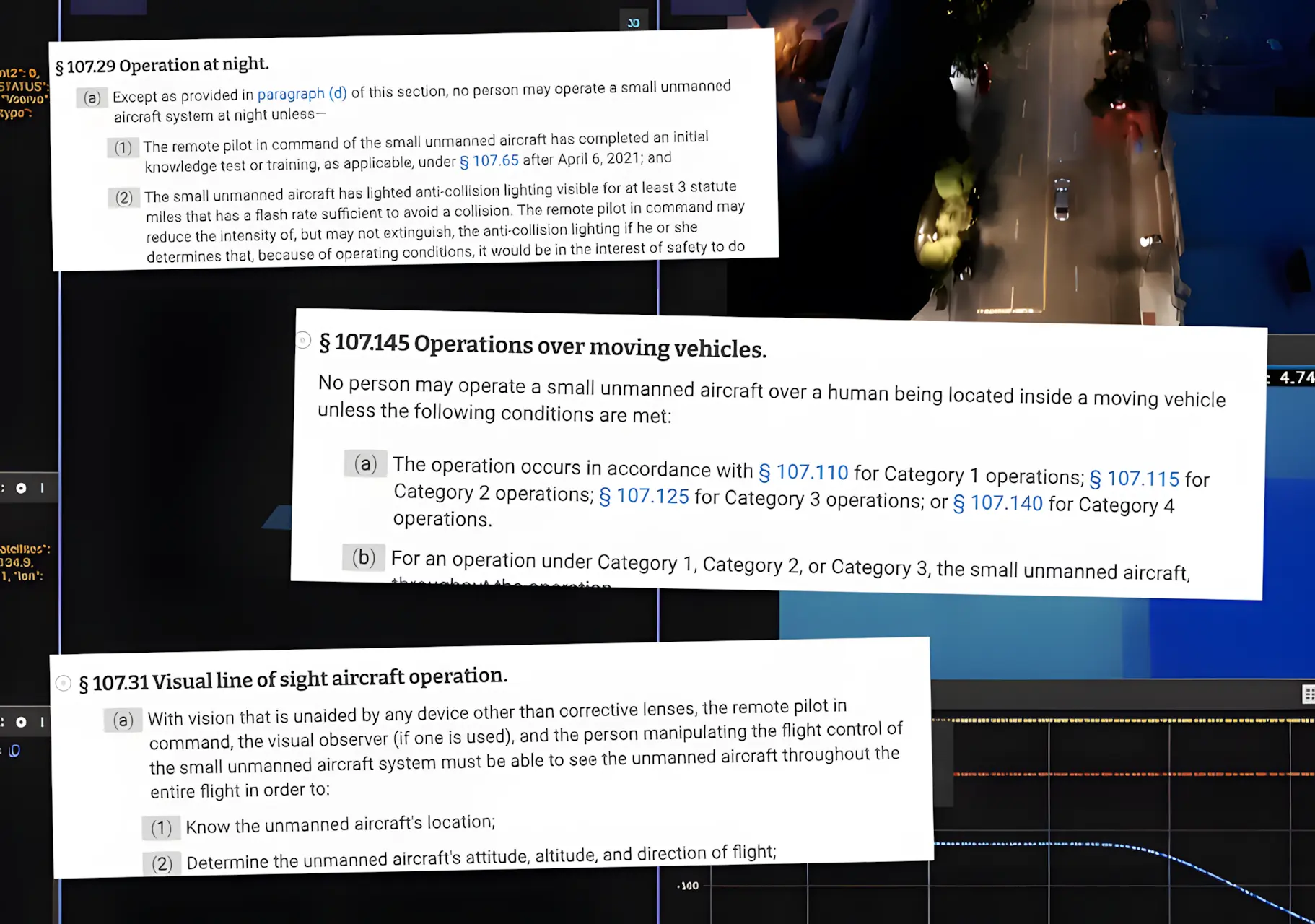

Under Part 107.31, a remote pilot must maintain visual line of sight with their aircraft at all times, unaided by any device other than corrective lenses. A camera feed doesn't satisfy that requirement. This rule exists because a screen puts a layer of abstraction between the pilot and the physical consequences of what the aircraft is doing in real space, and that abstraction is exactly what makes autonomous tracking over a live urban environment so dangerous.

The intersection clip raises potential violations on two more fronts. Part 107.39 covers flight over people: pedestrians and anyone else not sheltered under a covered structure or inside a stationary vehicle. Part 107.145 addresses the vehicles themselves, permitting operations over moving vehicles only within closed or restricted-access sites, or when the aircraft does not maintain sustained flight over them. Autonomously tracking a car through traffic is, by definition, sustained flight over a moving vehicle.

Nighttime operations carry their own requirements under Part 107.29, including anti-collision lighting visible for at least three statute miles. We can't tell from the clip whether that requirement was met. And BVLOS waivers, which this operation would almost certainly require, aren't casually obtained; they involve detailed safety cases, operational risk assessments, and review timelines that don't move at the pace of a product announcement.
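To make the overlap concrete, here is a minimal sketch of how the conditions described above map onto the sections I've cited. Everything in it is hypothetical and deliberately simplified: `FlightPlan`, its fields, and `part107_flags` are names I made up for illustration, they are not part of Dimensional's software or any FAA tool, and the real rules carry far more conditions than a handful of booleans can capture.

```python
from dataclasses import dataclass


@dataclass
class FlightPlan:
    """Hypothetical, simplified description of a planned operation."""
    within_visual_line_of_sight: bool
    over_unsheltered_people: bool
    sustained_flight_over_moving_vehicles: bool
    closed_or_restricted_site: bool
    at_night: bool
    anti_collision_light_confirmed: bool  # lighting visible for 3 statute miles
    waived_sections: frozenset = frozenset()  # sections covered by an FAA waiver


def part107_flags(plan: FlightPlan) -> list:
    """Return the Part 107 sections this plan appears to implicate.

    Only encodes the conditions discussed in this post; it is not a
    complete or authoritative reading of the regulation.
    """
    flags = []
    if not plan.within_visual_line_of_sight:
        flags.append("107.31")
    if plan.over_unsheltered_people:
        flags.append("107.39")
    if (plan.sustained_flight_over_moving_vehicles
            and not plan.closed_or_restricted_site):
        flags.append("107.145")
    if plan.at_night and not plan.anti_collision_light_confirmed:
        flags.append("107.29")
    # Drop any section the operator holds a waiver for.
    return [s for s in flags if s not in plan.waived_sections]


# The clip as it appears on screen. These values are my reading of the
# footage, not confirmed facts about the operation.
clip = FlightPlan(
    within_visual_line_of_sight=False,
    over_unsheltered_people=True,
    sustained_flight_over_moving_vehicles=True,
    closed_or_restricted_site=False,
    at_night=True,
    anti_collision_light_confirmed=False,
)
print(part107_flags(clip))  # ['107.31', '107.39', '107.145', '107.29']
```

A check like this is trivial to write. Nothing about the technology prevents an interface from asking these questions before it hands a sentence to the flight controller.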

Never Crossing the Bridge

This is where the NLP piece becomes the story, and it's worth being precise about what happened technically, because the developer behind this isn't a clueless hobbyist. Architecting an agentic system that routes natural language instructions through MAVLink and controls a drone autonomously requires genuine technical sophistication. That sophistication is exactly what makes the clip a more useful exhibit than a random person flying a Mavic over a crowd, because the regulatory blind spot here isn't a product of ignorance. It's a product of what NLP tooling does to the felt relationship between competence and capability.

The model parsed a plain English instruction, translated it into flight commands, and the aircraft executed cleanly. From inside that experience, it probably feels like mastery. What it doesn't feel like, and what the interface has no mechanism to surface, is the body of knowledge that has nothing to do with whether the system works, and everything to do with whether it's legal to run.

Technical competence and regulatory competence don't transfer to each other, and that gap is precisely what Part 107 exists to address. The knowledge exam tests:

Airspace classifications

Weather minimums

Right-of-way rules

The conditions under which flight over people is permitted

What a waiver requires and how long the process actually takes

Those are entirely separate domains, and a clean natural language interface does nothing to bridge them. Instead, it makes the gap invisible to the person who most needs to see it.

Autopilot in manned aviation is a useful comparison here, because you still need a license to engage it. The abstraction never removed the accountability; it simply made certain tasks easier within a framework the operator already had to demonstrate competency in. What's being built here inverts that entirely.

The Gap Is Widening Faster Than the Rules Can Follow

The FAA's rulemaking has always lagged behind the technology, and that's not new to anyone working in commercial drone operations. We've watched it through the rollout of Remote ID, through the slow development of BVLOS frameworks, through every iteration of the Low Altitude Authorization and Notification Capability (LAANC) system. What's different now is the rate of acceleration.

Building autonomous drone control used to involve enough technical friction that developers at least bumped into the regulatory surface area along the way; someone building a custom MAVLink stack is going to encounter airspace concepts whether they go looking for them or not.

A consumer typing a prompt into a text box is not, and my estimate is that the consumer product version of this is eighteen months away, maybe less. The repo the developer mentioned dropping publicly is the bridge between "a sophisticated person built this" and "anyone can run this," and the current enforcement infrastructure isn't built for that volume.

The drone industry has seen contained versions of this before:

Unlicensed real estate photographers operating commercially without Part 107 certification

Hobbyists in restricted airspace around stadiums and airports because nothing in the experience of buying a drone suggested they couldn't be there

Those incidents were manageable because the barrier to doing something technically sophisticated with a drone still existed. Autonomous AI-controlled flight via natural language prompt is a different category of accessible, and the question of who's responsible when something goes wrong doesn't get simpler when the instruction came from a model and the operator didn't fully understand what they were authorizing.

This Isn't About Stopping What's Being Built

None of this is an argument against what Dimensional is building, or against the developers who are clearly building it well. But the drone community has spent years trying to establish a culture of compliance against a market that never required it, fighting for enforcement at the point of sale, for mandatory education, for Remote ID, for frameworks that make shared airspace legible to everyone using it. A natural language interface that executes flight commands without surfacing a single regulatory obligation doesn't just create new risk. It makes that work harder.

The people in that Twitter thread worried about Black Mirror aren't really wrong. But the threat isn't some distant dystopia; it's the compounding of a hundred small decisions being made right now, by capable people who never had to reckon with the rules they were crossing, because nothing in their toolchain asked them to. The FAA now has to decide whether it'll meet this moment with actual enforcement: fines, point-of-sale requirements, mandatory credentialing pathways. Or it can continue to lag and wait for something to force its hand.

I'd really rather it not be a crash.

As a company that operates commercially in this airspace, we've built our practice around these rules — not because compliance is easy, but because shared airspace requires it. For more on safe drone practices and community research, check out Suave Droning’s Terraflight Insights blog.

Connect with Our Team

Kelly Ortega - CEO | Suave Droning

Connect on LinkedIn

Nicolo Cincotta - COO | Suave Droning

Connect on LinkedIn